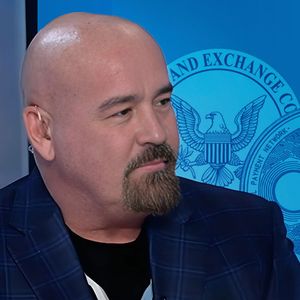

Coinbase CLO Uses Bump Stock Gun Case Against SEC, Here’s What it Means!

Coinbase’s Chief Legal Offi... (first appeared on Coinpedia Fintech News)

I Ate Ramen That Was 'Imagined' in the Metaverse—Here's What That Means

Chef Esther Choi is bringing noodles from the metaverse to the real world. Decrypt tried them and spoke to KPR's creators about their vision.

New Survey Points To Increased Web3 Utility; However, People Don’t Know What It Really Means

Web3 adoption around the world is growing across all age demographics, although many seem to be lost on the actual meaning of the word.

AVAX’s new milestone means little for investors because…

Avalanche has indeed been benefiting from healthy growth in the last few weeks. But just how much of this address activity is new or recurrent? DeFiLa...

What XRP’s decoupling means for you

XRP was witnessing more transactions at a loss than at a profit, unlike the rest of the altcoins.

Latest Crypto News

Related News


What BNB Chain’s latest offering means for investors

BNB’s latest fast finality features brought enhanced security against double-spe...

Featured News


SEC Asks Courts for Permission To Hunt Down Binance CEO Ch...

The U.S. Securities and Exchange Commission (SEC) is asking courts for permissio...